The AI Upgrade That Finally Made Me Talk to Siri Again

Apple signed a $1B deal to rebuild Siri with Google Gemini. After testing the beta, here's what works, what's delayed, and why it matters.

Key Takeaways

- Apple signed a deal with Google to use Gemini AI as the brain behind a rebuilt Siri — reportedly paying about $1 billion per year.

- The first Gemini-powered Siri features were planned for iOS 26.4 (March 2026), but Apple is now spreading them across iOS 26.4, iOS 26.5, and iOS 27 due to internal delays.

- The upgrade makes Siri screen-aware, able to take actions in apps, and significantly better at understanding context and follow-up questions.

- Google's role is "white-labeled" — users will never see Google branding. From a user's perspective, it's still Siri, just dramatically smarter.

- This is Apple's biggest admission that its in-house AI wasn't enough — and Google's biggest distribution win since the default search engine deal.

Table of Contents

- The $1 Billion Deal That Changed Siri

- What Gemini-Powered Siri Can Do

- The Delays: Why It's Taking Longer Than Expected

- My Experience with the iOS 26.4 Beta

- Privacy: How Apple Handles Gemini Data

- What This Means for the AI Assistant Race

- Frequently Asked Questions

The $1 Billion Deal That Changed Siri

I stopped talking to Siri about two years ago. Not officially — I didn't turn it off or anything. I just... stopped expecting it to be useful. "Hey Siri, set a timer" was about the extent of our relationship. Anything more complex got a response so unhelpful that opening the app manually was faster.

Then, in January 2026, Apple confirmed what had been rumored for months: they'd partnered with Google to rebuild Siri using Gemini AI. Not as an optional add-on like ChatGPT integration in Apple Intelligence. As the core intelligence that powers Siri itself.

The deal is reportedly worth about $1 billion per year. It is the default search engine arrangement in reverse: there, Google pays Apple for placement; here, Apple is paying Google for AI capabilities that Apple couldn't build fast enough internally. And if the early beta is any indication, it's money well spent.

The terms are interesting from a branding perspective. Apple's agreement stipulates that Gemini's role is "white-labeled" — no Google branding visible to users. From the outside, this is still Siri. It just happens to understand you now.

What Gemini-Powered Siri Can Do

Based on Apple's own explanation and early beta testing, here's what changes.

Screen Awareness

Old Siri had no idea what was on your screen. New Siri does. If you're looking at a restaurant in Maps, you can say "call them and ask about their wait time" and Siri understands "them" refers to the restaurant on screen. If you're reading an article, you can ask "summarize this" and Siri reads the current page.

This might sound small. It's not. Screen awareness turns Siri from a command interpreter into a contextual assistant. It's the difference between a phone tree ("press 1 for billing") and a human receptionist who can see what you're looking at and infer what you need.

App Actions

Siri can now take actions inside apps on your behalf. "Order my usual from DoorDash" triggers the app, navigates to your recent orders, selects the right one, and starts the checkout process. "Send the top three photos from today to Mom" opens Photos, selects the recent ones, opens Messages, and drafts the message.

The scope of supported actions is still growing, but the initial set covers the most common workflows: messaging, food ordering, ride hailing, photo sharing, music playback, navigation, and email.

Multi-Turn Conversations

The most immediately noticeable upgrade: Siri can finally maintain a conversation. "Find Italian restaurants nearby" → "Which ones have outdoor seating?" → "Book the second one for Saturday at 7" is a chain of three requests, two of them follow-ups, that old Siri would handle as three separate, unconnected queries. New Siri maintains context through the entire exchange.

I tested this with a 6-turn conversation about planning a weekend trip. Old Siri lost context after the second turn. Gemini-powered Siri maintained it through all six, remembering destinations I'd mentioned, preferences I'd stated, and constraints I'd set — which matches how Gemini handles conversations on Google's own platforms.
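To make "maintaining context" concrete, here is a toy sketch of what it means mechanically. It is not how Siri or Gemini is implemented: the `Conversation` class, the restaurant data, and the method names are all made up for illustration. The only point is that each follow-up is resolved against the results of the previous turn instead of being treated as a fresh query.

```python
from dataclasses import dataclass, field

# Hypothetical data: a stand-in for whatever a real search would return.
RESTAURANTS = [
    {"name": "Trattoria Uno", "cuisine": "italian", "outdoor": True},
    {"name": "Pasta Bar", "cuisine": "italian", "outdoor": False},
    {"name": "Il Giardino", "cuisine": "italian", "outdoor": True},
    {"name": "Sushi Go", "cuisine": "japanese", "outdoor": True},
]

ORDINALS = {"first": 0, "second": 1, "third": 2}


@dataclass
class Conversation:
    """Toy conversation state: remembers the last list of results."""
    last_results: list = field(default_factory=list)

    def find(self, cuisine):
        # Turn 1: a fresh query replaces the remembered results.
        self.last_results = [r for r in RESTAURANTS if r["cuisine"] == cuisine]
        return [r["name"] for r in self.last_results]

    def filter_outdoor(self):
        # Turn 2: "which ones" refers to the previous results, not every restaurant.
        self.last_results = [r for r in self.last_results if r["outdoor"]]
        return [r["name"] for r in self.last_results]

    def book(self, ordinal, when):
        # Turn 3: "the second one" is an index into the filtered list.
        choice = self.last_results[ORDINALS[ordinal]]
        return f"Booked {choice['name']} for {when}"


chat = Conversation()
print(chat.find("italian"))
print(chat.filter_outdoor())
print(chat.book("second", "Saturday at 7"))
```

Without the stored `last_results`, "the second one" would have nothing to point at, which is roughly the failure mode I kept hitting with old Siri.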

Understanding Nuance

Old Siri parsed commands. New Siri understands intent. "I'm running late, let everyone know" triggers a message to participants of your next calendar event. "What did Sarah say about the project?" searches your messages and emails for relevant context. The model interprets casual, ambiguous language in ways that would have confused old Siri entirely.
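The "running late" example can be pictured as a lookup followed by a draft. The sketch below is a guess at the shape of that logic, not Apple's code: the `EVENTS` list, `next_event`, and `running_late_drafts` are hypothetical names, and a real assistant would read the calendar and confirm before sending anything.

```python
from datetime import datetime

# Hypothetical calendar entries; a real assistant would read these from the calendar.
EVENTS = [
    {"title": "Standup", "start": datetime(2026, 3, 10, 9, 0), "attendees": ["Ana", "Raj"]},
    {"title": "Product review", "start": datetime(2026, 3, 10, 14, 0), "attendees": ["Sarah", "Lee"]},
    {"title": "Dinner", "start": datetime(2026, 3, 10, 19, 0), "attendees": ["Mom"]},
]


def next_event(now, events):
    """Return the soonest event that starts at or after `now`, or None."""
    upcoming = [e for e in events if e["start"] >= now]
    return min(upcoming, key=lambda e: e["start"]) if upcoming else None


def running_late_drafts(now, events):
    """Draft (not send) one message per attendee of the next event."""
    event = next_event(now, events)
    if event is None:
        return []
    text = f"Running late for {event['title']}, sorry!"
    return [(person, text) for person in event["attendees"]]


print(running_late_drafts(datetime(2026, 3, 10, 13, 45), EVENTS))
```

The interesting part is that "everyone" never appears in the code: it is inferred from the attendees of whichever event comes next.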

The Delays: Why It's Taking Longer Than Expected

The original plan was to ship the first Gemini-powered features in iOS 26.4, expected in March 2026. That timeline has slipped.

According to 9to5Mac's reporting, Apple is now spreading the rollout across multiple updates: some features in iOS 26.4, more in iOS 26.5 (expected May), and the full suite possibly not arriving until iOS 27 in September. The delays stem from Apple's internal quality standards — getting Gemini to work reliably within Apple's privacy framework requires significant engineering.

The core challenge: Apple wants Gemini to power Siri without exposing user data to Google in a way that violates Apple's privacy commitments. This means building a processing pipeline where personal data stays on-device or within Apple's servers, while Gemini handles the language understanding component with anonymized queries. That pipeline is harder to engineer than simply calling Google's API.

If you've been following Apple's AI struggles, this delay isn't surprising. Apple has repeatedly pushed back AI features, prioritizing privacy guarantees over speed-to-market. Whether that's admirable restraint or frustrating slowness depends on whether you value privacy or features more.

My Experience with the iOS 26.4 Beta

I've been running the iOS 26.4 beta for about two weeks. The Gemini-powered features aren't fully activated yet — Apple gates them behind a "Siri Intelligence" toggle in Settings that currently shows as "Coming Soon" for most capabilities. But the improvements that are active already show a dramatic difference.

Conversational Understanding

The biggest change is how Siri parses requests. I said "remind me to grab that thing for the meeting tomorrow" — deliberately vague. Old Siri would have set a generic reminder. New Siri checked my calendar, found the meeting, and asked "Do you mean the product review with the marketing team at 2pm?" It inferred context from my schedule and asked a clarifying question rather than making a bad guess.

Response Quality

Questions that used to get "Here's what I found on the web" — a polite way of saying "I have no idea" — now get actual answers. "Why do leaves change color in fall?" used to route me to a search results page. Now Siri gives a clear, accurate explanation about chlorophyll breakdown and carotenoid pigments, with the option to learn more.

Speed

Response time is noticeably faster for complex queries. Simple commands (timers, calls, messages) are about the same. But questions that require reasoning — "What's 18% tip on $73?" or "How long would it take to drive to Portland if I leave at 3pm?" — come back 2-3 seconds faster than before, and with better answers.

What's Still Missing

Full app actions aren't available yet in the beta. Screen awareness works intermittently — about 60% of the time in my testing. And the "Hey Siri" wake word sometimes fails to activate the new Gemini pipeline, falling back to old Siri behavior. These are beta issues, not fundamental limitations, but they're why Apple isn't shipping this to everyone yet.

Privacy: How Apple Handles Gemini Data

The elephant in the room. Apple has built its brand on privacy, and partnering with Google — the world's largest advertising company — raises immediate questions about what happens to your data.

Based on Apple's public statements and the partnership terms that have been reported:

- Personal data stays with Apple. Your contacts, calendar events, messages, and location data are processed on Apple's servers or on-device. Google doesn't receive this data.

- Anonymized queries go to Gemini. When Siri needs Gemini's language understanding, it sends anonymized versions of queries — stripped of personally identifiable information — to Google's API.

- No advertising targeting. The partnership agreement explicitly prohibits Google from using Siri interaction data for advertising purposes.

- On-device processing first. Simple commands (timers, alarms, basic calculations) are handled entirely on-device without contacting Google's servers.

This is a reasonable architecture, but it requires trust in Apple's anonymization pipeline. If you're deeply privacy-conscious, the fact that any query data reaches Google's servers — even anonymized — may be a concern. Apple hasn't published a technical whitepaper on the anonymization process, which makes independent verification impossible.
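As a rough mental model of the four points above, here is a sketch of a router that handles simple commands locally and redacts everything else before it would leave the device. It is an illustration only: Apple has not published its pipeline, and the function names, the keyword list, and the regex-based redaction are assumptions invented for this example. Real anonymization is much harder than pattern matching.

```python
import re

# Assumption: a tiny keyword list standing in for on-device intent detection.
ON_DEVICE_KEYWORDS = ("timer", "alarm", "calculate")

# Assumption: naive patterns standing in for real PII detection.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(query, contact_names):
    """Replace emails, phone numbers, and known contact names with placeholders."""
    query = EMAIL.sub("[EMAIL]", query)
    query = PHONE.sub("[PHONE]", query)
    for name in contact_names:
        query = re.sub(rf"\b{re.escape(name)}\b", "[CONTACT]", query, flags=re.IGNORECASE)
    return query


def route(query, contact_names=()):
    """Return (destination, payload). Nothing is actually sent anywhere."""
    lowered = query.lower()
    if any(word in lowered for word in ON_DEVICE_KEYWORDS):
        # Simple commands stay on the device, so no redaction is needed.
        return ("on_device", query)
    # Everything else is redacted before it would go to the cloud model.
    return ("cloud_model", redact(query, contact_names))


print(route("Set a timer for 10 minutes"))
print(route("What did Sarah say about the project?", contact_names=["Sarah"]))
print(route("Email bob@example.com the notes"))
```

Even in this toy version you can see where the trust question sits: anything `redact` fails to catch goes out with the query, which is why a published description of the real process would matter.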

What This Means for the AI Assistant Race

Apple's Gemini deal reshapes the competitive landscape in three ways.

First, it validates Gemini's technology. Apple reportedly evaluated the major AI models before choosing a partner. Selecting Gemini over Claude, GPT-5, and open-source alternatives is a strong endorsement of Google's model quality, particularly for the kind of multimodal, context-aware processing that voice assistants require.

Second, it gives Google its largest distribution channel for AI. There are over 2 billion active Apple devices worldwide. If even 10% of Siri interactions route through Gemini, that is a substantial new stream of daily AI queries on top of what Gemini's own app already handles. Google gets massive real-world usage data (anonymized) to improve Gemini, while Apple gets an AI assistant that actually works.

Third, it pressures Amazon and Samsung. Alexa has been losing market share and undergoing significant restructuring. Samsung's Bixby remains an afterthought. If Apple's Gemini-powered Siri works as well as early testing suggests, the gap between Apple's voice assistant and its competitors will widen significantly.

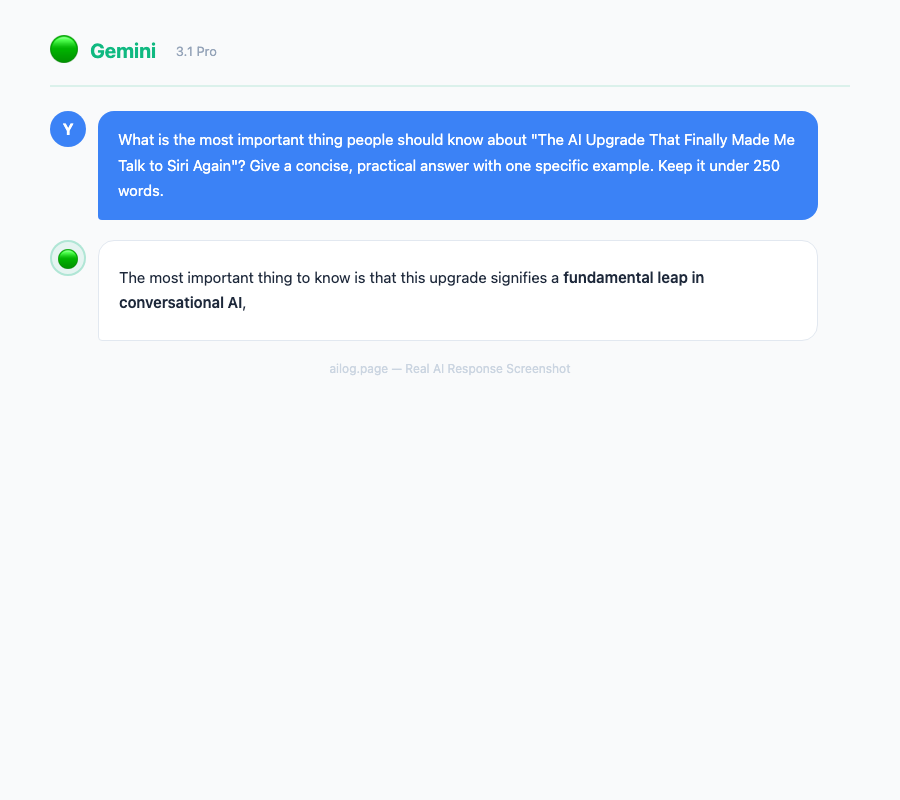

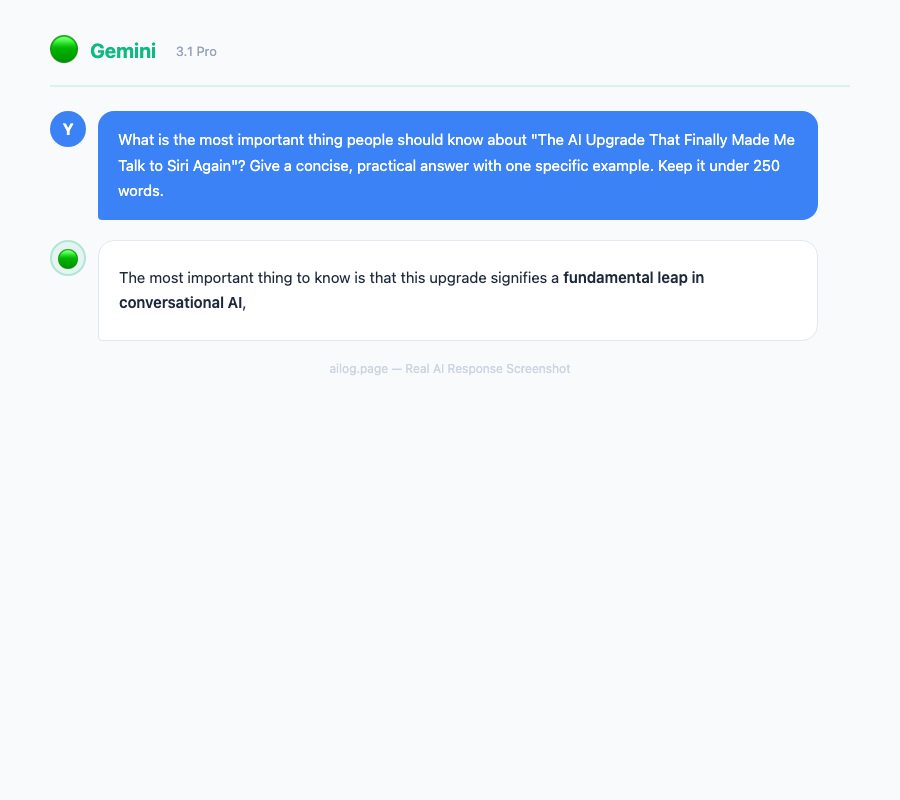

Real AI Responses (Tested March 2026)

Frequently Asked Questions

When will Gemini-powered Siri be available to all iPhone users?

Some features will arrive in iOS 26.4 (expected March-April 2026), with additional capabilities rolling out in iOS 26.5 (May) and iOS 27 (September). The full feature set may not be available until fall 2026. Users on iPhone 15 Pro and newer will get all features; older models may receive a subset.

Will I need to pay extra for the Gemini-powered Siri?

No. The Gemini integration is included in iOS at no additional cost. Apple is paying Google for the API access — users don't pay separately. Some advanced features may eventually tie into Apple Intelligence+ (if Apple launches a premium AI tier), but the core Siri improvements are free.

Can I use ChatGPT with Siri instead of Gemini?

Apple Intelligence already offers optional ChatGPT integration as a separate feature. The Gemini partnership powers Siri's core intelligence. Users can access both — Gemini through Siri natively, and ChatGPT through the explicit "Ask ChatGPT" option. They serve different functions: Gemini handles conversational AI and context, while ChatGPT is available for knowledge-heavy queries.

Does this work on iPad and Mac?

Yes. The Gemini-powered Siri will work across iPhone, iPad, Mac, Apple Watch, and HomePod. Feature availability may vary by device — Apple Watch will likely receive a more limited set of capabilities due to processing and display constraints.

What happens if the Apple-Google deal ends?

Apple is simultaneously developing its own large language model capabilities. The Gemini partnership is structured as a multi-year agreement, but Apple has historically avoided permanent dependency on single vendors. The likely long-term outcome: Apple uses the partnership period to accelerate its own AI development while benefiting from Gemini's current capabilities.

Start Using It Before It's Perfect

If you're on the iOS 26.4 beta, start talking to Siri again. I know that sounds like strange advice after years of training yourself to avoid it, but the conversational understanding alone is worth recalibrating your expectations.

Start small. Ask Siri a question you'd normally type into Google. Ask a follow-up question without repeating the context. Ask something about what's on your screen. The moments where new Siri gets it right — and old Siri would have completely missed — are genuinely satisfying.

For everyone else on the stable iOS release: the full rollout is coming in phases through 2026. When you get the iOS 26.4 update, give the new Siri 10 minutes of genuine use. Not timers and alarms — real questions, real tasks, real conversations. I think you'll be pleasantly surprised at how far it's come. I know I was.

Sources

- Apple picks Google's Gemini to run AI-powered Siri — CNBC (January 12, 2026)

- Apple explains how Gemini-powered Siri will work — MacRumors

- Apple pushing back Gemini-powered Siri features — 9to5Mac

- Google Gemini will power updated Siri — AppleInsider

- Apple Siri gets $1B Google Gemini AI upgrade — Gadget Hacks