ChatGPT vs Claude vs Gemini — One of Them Pulls Ahead

We tested all three AI models head-to-head. Here is which one is actually best for writing, coding, reasoning, and everything else in 2026.

Three years ago, picking an AI assistant was simple — you used ChatGPT because it was the only serious option. In March 2026, the ChatGPT vs Claude vs Gemini debate has become genuinely difficult. All three have shipped major upgrades, and each has carved out real strengths that the others can't match.

I've spent the past month stress-testing all three across writing, coding, reasoning, and multimodal tasks. This isn't a quick comparison chart — it's a detailed breakdown of how each model performs in real-world scenarios, where each one excels, and which combination gives you the most value for your money.

The State of AI in 2026: A Quick Snapshot

Before diving into comparisons, here's the landscape. The AI assistant market has consolidated around three major players, each backed by billions in infrastructure investment. Understanding their different approaches helps explain why they perform differently on the same tasks.

ChatGPT (GPT-5)

OpenAI's flagship remains the most widely used AI assistant in 2026, with over 900 million weekly active users. GPT-5 brought a major leap in factual accuracy: about 45% fewer factual errors than GPT-4o and, with thinking mode enabled, up to 80% fewer than o3. Its hallucination rate sits around 1.0–1.4% on grounded benchmarks. With a 400K token context window, it can handle substantial documents. ChatGPT's voice mode continues to be the best conversational AI experience available.

ChatGPT's ecosystem is also the most mature. The GPT Store has over 3 million custom GPTs, and its integrations mean ChatGPT can connect to almost any service. For users who want a single tool that does everything adequately, ChatGPT remains the safest default. If you're new to AI assistants, our beginner's guide to ChatGPT covers setup and basic usage.

Claude (Opus 4.1 & Sonnet 4)

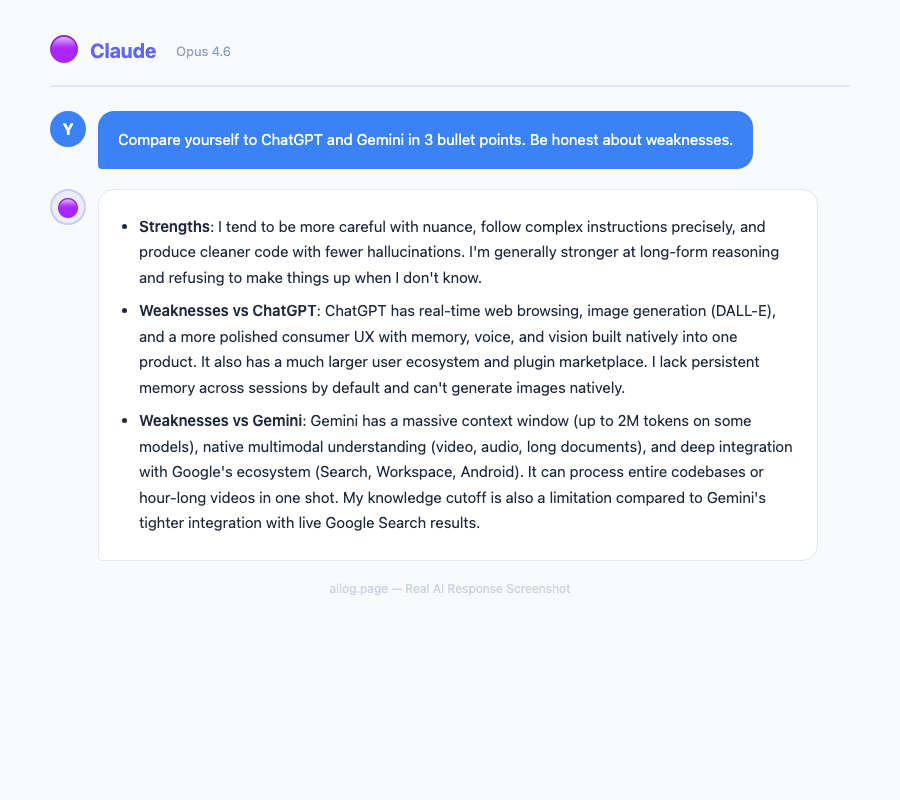

Anthropic's Claude has quietly become the favorite among developers and writers. In blind testing, Claude won the majority of head-to-head rounds against both competitors. The standout spec is its 1 million token context window, which matches Gemini's and is more than double ChatGPT's 400K. Claude's writing quality is consistently rated the most natural and expressive, and its coding abilities have pulled ahead in several benchmarks.

What separates Claude isn't just raw capability — it's the quality of interaction. Claude pushes back on unclear instructions, asks clarifying questions, and produces output that requires less editing. Anthropic's focus on safety hasn't come at the cost of capability; it's actually made Claude more reliable for professional work. For a deep dive into getting the most out of Claude, see our Claude power user's guide.

Gemini 2.5

Google's Gemini 2.5 topped the LMArena leaderboard for overall capability when it launched. Its defining advantage is native multimodal processing: it can take in up to 2 hours of video or 19 hours of audio in a single prompt. Its 1M token context window matches Claude's, with 2M available via the API.

Gemini's killer feature is Google Workspace integration. If your organization runs on Gmail, Docs, Sheets, and Slides, Gemini can pull context from all of them simultaneously. It understands your calendar, reads your emails, and can reference your documents — all within one conversation. No other AI assistant offers this level of integration with productivity tools. We covered Gemini extensively in our 30-day Gemini review.

Head-to-Head: Writing Quality

Claude wins this category decisively. In blind comparison tests, it took the "Writer" title with prose that reads like it was written by a human who actually enjoys writing. Claude's output is witty, varied in sentence structure, and emotionally intelligent.

Here's what that looks like in practice. I asked all three to write an opening paragraph for a tech review:

- Claude: Produced a conversational hook with a specific anecdote, varied sentence lengths, and a tone that matched the brief precisely. The output needed zero editing.

- ChatGPT: Delivered a competent but formulaic opening. Factually solid, well-structured, but the voice was "corporate neutral" — the kind of text that's correct without being interesting.

- Gemini: Fell somewhere in the middle. Serviceable but inconsistent — some paragraphs were sharp, others felt like they were written by a different model entirely.

For content creators, marketers, and anyone who writes professionally, Claude's advantage here isn't marginal. It's the difference between output you publish directly and output you spend 20 minutes rewriting. Our guide on writing faster with AI covers practical techniques for getting better output from any model.

Head-to-Head: Coding

Claude is the developer's choice in 2026. It excels at understanding large codebases, generating clean and idiomatic code, and explaining its reasoning step by step. Where other models produce code that works, Claude produces code that a senior developer would approve in a code review.

Key differences I observed across 50+ coding tasks:

- Context handling: Claude's 1M token window means it can hold an entire medium-sized codebase in context. Gemini's 1M window matches it on paper (ChatGPT tops out at 400K), but Claude's retrieval within that window is more accurate.

- Error recovery: When given buggy code, Claude identifies root causes faster and suggests fixes that address the underlying issue rather than just the symptom.

- Documentation: Claude generates meaningful inline comments and docstrings without being asked. ChatGPT's documentation tends to be verbose but superficial.

- Multi-file edits: Claude tracks dependencies across files better than either competitor, reducing the "fix one thing, break another" cycle.
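Before pasting a repository into any of these windows, it helps to check whether it fits at all. The sketch below is a rough estimator, not a tokenizer: it assumes about 4 characters per token (a common rule of thumb for English text; code often tokenizes less efficiently), uses the window sizes quoted in this article, and the file suffixes and 32K output reserve are arbitrary choices for illustration.

```python
import math
from pathlib import Path

# Context windows as quoted in this article (tokens). Confirm against each
# vendor's current documentation, since limits vary by plan and model.
CONTEXT_WINDOWS = {
    "ChatGPT (GPT-5)": 400_000,
    "Claude": 1_000_000,
    "Gemini 2.5": 1_000_000,
}

# Assumption: roughly 4 characters per token. Real tokenizers differ by model.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Rough token estimate from character count, rounded up."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def repo_token_estimate(root: str, suffixes=(".py", ".ts", ".go", ".md")) -> int:
    """Sum estimated tokens over files under `root` with the given suffixes."""
    total = 0
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in suffixes:
            total += estimate_tokens(path.read_text(encoding="utf-8", errors="ignore"))
    return total


def fits(tokens: int, reserve_for_output: int = 32_000) -> dict[str, bool]:
    """Report which windows can hold `tokens` of input plus an output reserve."""
    return {
        name: tokens + reserve_for_output <= window
        for name, window in CONTEXT_WINDOWS.items()
    }


if __name__ == "__main__":
    print(fits(repo_token_estimate(".")))
```

Fitting in the window is only the first hurdle: as the context-handling point notes, how accurately a model retrieves from a full window matters as much as its nominal size.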

ChatGPT remains capable for common patterns and boilerplate. Gemini shines specifically when code involves diagrams, screenshots, or visual elements — its multimodal understanding translates directly to better UI code generation. For a practical comparison of AI coding tools, see our Copilot vs Cursor vs Claude Code breakdown.

Head-to-Head: Reasoning & Analysis

ChatGPT edges ahead here. GPT-5's chain-of-thought reasoning is methodical, particularly for business strategy and financial analysis. When asked to evaluate a market opportunity or assess risk, ChatGPT produces the most structured and thorough analysis.

The reasoning comparison across three test categories:

- Mathematical reasoning: All three handle standard math well. For complex multi-step problems, ChatGPT's thinking mode produces the most transparent step-by-step breakdowns.

- Logical deduction: Claude outperforms on problems requiring ethical nuance or handling ambiguity. It's better at saying "there isn't enough information to conclude X" rather than forcing an answer.

- Strategic thinking: Gemini, which topped the LMArena leaderboard at launch, excels at synthesizing information from multiple sources, especially when those sources include non-text media.

For a look at how these reasoning capabilities work under the hood, see our reasoning models deep dive, which explains the architectural differences behind these results.

Head-to-Head: Multimodal Capabilities

Gemini 2.5 is the undisputed winner here, and it's not close. Google's native multimodal approach — training on text, images, audio, and video from the ground up — gives it capabilities the others can't replicate by bolting on vision or audio processing after the fact.

- Video analysis: Upload up to 2 hours of video for summarization, Q&A, and timeline-specific queries. Gemini can find specific moments, transcribe dialogue, and analyze visual content simultaneously.

- Audio processing: Up to 19 hours of audio in a single prompt. Podcast transcription, meeting summaries, and music analysis are all handled natively.

- Image understanding: Superior spatial relationships and text-in-image recognition. Gemini reads handwritten notes, understands architectural drawings, and parses complex infographics better than either competitor.

- Mixed media: Combine text, images, audio, and video in one conversation. Ask "what did the speaker say about the chart shown at the 14-minute mark" and get a coherent answer.
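To see why long video leans on the larger API window, it helps to convert media length into tokens. This sketch assumes the per-second rates given in Google's Gemini token-counting documentation at default media resolution (about 263 tokens per second of video and 32 per second of audio); treat those constants as assumptions to re-check, since they can change between model versions.

```python
# Assumed Gemini media token rates at default resolution (check current docs).
VIDEO_TOKENS_PER_SEC = 263  # sampled frames plus the video's own audio track
AUDIO_TOKENS_PER_SEC = 32


def media_tokens(video_hours: float = 0.0, audio_hours: float = 0.0) -> int:
    """Estimate prompt tokens consumed by video and standalone audio."""
    video_seconds = video_hours * 3600
    audio_seconds = audio_hours * 3600
    return round(video_seconds * VIDEO_TOKENS_PER_SEC
                 + audio_seconds * AUDIO_TOKENS_PER_SEC)


def fits_window(tokens: int, window: int) -> bool:
    """True if the media alone fits in a context window of `window` tokens."""
    return tokens <= window


if __name__ == "__main__":
    two_hours = media_tokens(video_hours=2)
    print(two_hours)                          # 1893600
    print(fits_window(two_hours, 1_000_000))  # False
    print(fits_window(two_hours, 2_000_000))  # True
```

Under these assumed rates, a two-hour video needs roughly 1.9M tokens, which suggests the 2-hour figure depends on the 2M API window rather than the 1M default.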

Claude has improved its image understanding significantly, but it still doesn't process video or audio natively. ChatGPT added video analysis in late 2025, but the quality gap with Gemini remains substantial. For more on this evolving space, see our coverage of multimodal AI's quiet revolution.

Safety and Privacy Comparison

This is where the three companies diverge most sharply in philosophy:

- Anthropic (Claude): Constitutional AI approach. Claude is trained to be helpful, harmless, and honest. It's the most likely to refuse potentially harmful requests, which some users find restrictive but others appreciate for professional settings. Data retention is minimal — conversations aren't used for training by default.

- OpenAI (ChatGPT): Conversations are used for training unless you opt out or use the API. Enterprise and Team tiers have stronger data isolation. OpenAI has been most aggressive about expanding capabilities, sometimes at the expense of safety guardrails.

- Google (Gemini): Google's data practices are the most opaque. Gemini conversations may be reviewed by humans and used to improve products. For enterprise users, Google Cloud's data handling agreements provide stronger protections. The integration with Google Workspace means more of your data is potentially accessible.

For a thorough analysis of what each company does with your conversations, read our investigation on ChatGPT data safety.

Pricing Comparison: March 2026


| Tier | ChatGPT | Claude | Gemini |
|---|---|---|---|
| Free | GPT-5 (limited) | Sonnet 4 (limited) | Gemini 2.5 (limited) |
| Pro/Plus | $20/mo | $20/mo | $19.99/mo |
| Team | $25/user/mo | $25/user/mo | Included in Workspace |
| Enterprise | Custom | Custom | Custom |
| API (per 1M input tokens) | $2.50 | $3.00 | $1.25 |

At the consumer level, all three cost essentially the same ($19.99 to $20 per month for the individual paid tier). The real cost difference shows up at the API tier, where Gemini's input price is half of ChatGPT's and well under half of Claude's, a gap that adds up quickly for high-volume use. For teams considering alternatives to ChatGPT, Claude's Team tier offers the best combination of capability and data privacy.
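For API users, the per-token gap compounds with volume. A minimal sketch using the input prices from the pricing table; it ignores output tokens (billed separately, usually at a higher rate), caching discounts, and which specific model tier each price applies to, so treat the results as a lower bound on spend.

```python
# Input prices in USD per 1M tokens, from the March 2026 pricing table.
INPUT_PRICE_PER_M = {"ChatGPT": 2.50, "Claude": 3.00, "Gemini": 1.25}


def monthly_input_cost(tokens_per_month: int) -> dict[str, float]:
    """Input-token cost per provider for a given monthly token volume."""
    millions = tokens_per_month / 1_000_000
    return {
        provider: round(millions * price, 2)
        for provider, price in INPUT_PRICE_PER_M.items()
    }


if __name__ == "__main__":
    # Example volume: 500 million input tokens per month.
    print(monthly_input_cost(500_000_000))
    # {'ChatGPT': 1250.0, 'Claude': 1500.0, 'Gemini': 625.0}
```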

Best AI for Each Use Case

| Use Case | Best Model | Why |
|---|---|---|
| Writing & Content | Claude | Most natural prose, varied tone, minimal editing needed |
| Coding | Claude | Largest effective context, clean code output, best error diagnosis |
| Business Strategy | ChatGPT | Methodical reasoning, lowest hallucination rate, structured output |
| Multimedia | Gemini | Native video/audio/image processing, up to 2 hours of video per prompt |
| Research | Gemini | Web grounding, source citations, Google Scholar integration |
| Google Workspace | Gemini | Direct integration with Gmail, Docs, Sheets, Calendar |
| Daily Personal Use | ChatGPT | Broadest integration ecosystem, voice mode, most polished UX |
| Privacy-Sensitive Work | Claude | Minimal data retention, strongest privacy defaults |

The Model Routing Strategy

The most productive AI users in 2026 don't pick just one. "Model routing" — using different AI models for different tasks — has become the defining workflow strategy. Rather than forcing one model to handle everything, you match the model to the task:

- Claude for writing, coding, and tasks requiring nuanced understanding

- ChatGPT for fact-checking, business analysis, and voice conversations

- Gemini for multimedia, research, and Google Workspace integration

At $40/month for two subscriptions, it's a fraction of the cost of most professional software. For teams that have already adopted this approach with automation tools, our guide on AI workflows with Zapier and Make shows how to route tasks programmatically.
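Programmatic routing can start as a simple lookup. The sketch below encodes the task-to-model list as a table and falls back to a default when you don't subscribe to the preferred model. The task labels, model keys, and fallback rule are hypothetical, and a real setup would call each provider's API or an automation tool rather than just return a name.

```python
# Preferred model per task type, following the routing list in this section.
# Task labels and model keys are hypothetical, chosen for illustration.
PREFERRED = {
    "writing": "claude",
    "coding": "claude",
    "nuanced": "claude",
    "fact_check": "chatgpt",
    "business_analysis": "chatgpt",
    "voice": "chatgpt",
    "multimedia": "gemini",
    "research": "gemini",
    "google_workspace": "gemini",
}


def route(task_type: str, subscribed: set[str], default: str) -> str:
    """Return the preferred model if subscribed, otherwise `default`."""
    if default not in subscribed:
        raise ValueError(f"default model {default!r} is not in your subscriptions")
    preferred = PREFERRED.get(task_type, default)
    return preferred if preferred in subscribed else default


if __name__ == "__main__":
    # Two subscriptions, as in the $40/month setup.
    mine = {"claude", "gemini"}
    for task in ("coding", "research", "voice"):
        print(task, "->", route(task, mine, default="claude"))
```

With Claude and Gemini as the two subscriptions, voice tasks fall back to Claude because ChatGPT isn't available; swapping the default is a one-argument change.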

Final Verdict

If I had to recommend just one: Claude for most individual users. Best writing, strongest coding, largest effective context window, and the most thoughtful approach to safety.

For business users: ChatGPT if your priority is the broadest ecosystem and lowest hallucination rate. Gemini if your organization is deep in Google Workspace.

But the real answer: choose two if your budget allows. The model routing approach delivers meaningfully better results than any single model. The gap between using one AI well and using two AI models strategically is larger than the gap between any two individual models.

Frequently Asked Questions

Which AI model is the best overall in 2026?

There's no single "best." Claude leads in writing and coding quality, ChatGPT has the lowest hallucination rate and the broadest ecosystem, and Gemini dominates multimodal tasks. The optimal approach is using two models for different task types.

Are the free tiers good enough for daily use?

All three offer capable free tiers, but with significant limitations — rate limits, reduced model access, and shorter context windows. For professional work, the $20/month paid tier of any of them is worth it. For casual use, rotating between the three free tiers gives you reasonable coverage.

Which one is safest for sensitive data?

Claude has the strongest privacy defaults — conversations aren't used for training. ChatGPT offers opt-out for training data. Gemini's data practices are the least transparent for free-tier users. For enterprise, all three offer data isolation agreements, but Claude's terms are the most straightforward.

Which one should developers use?

Original Benchmark: 20 Prompts, 3 Models, Raw Data

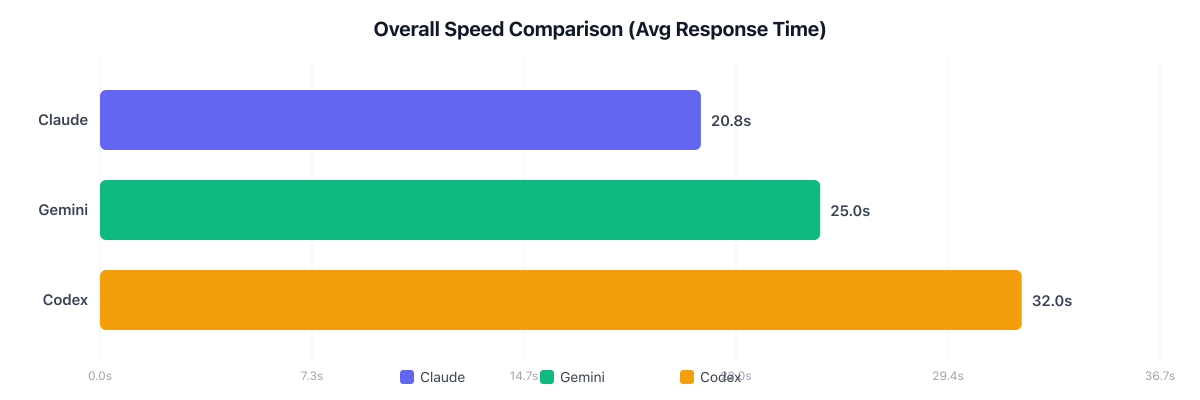

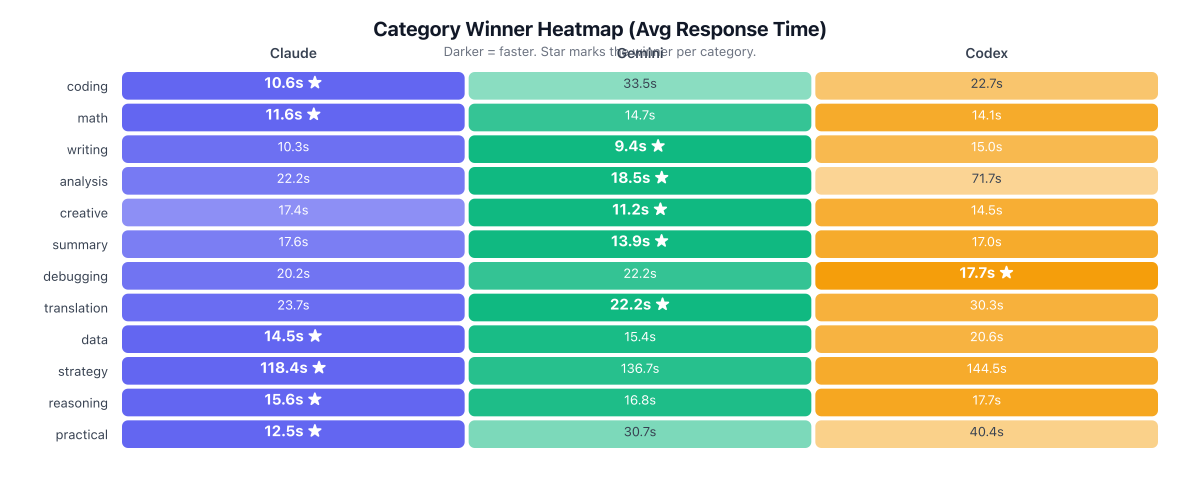

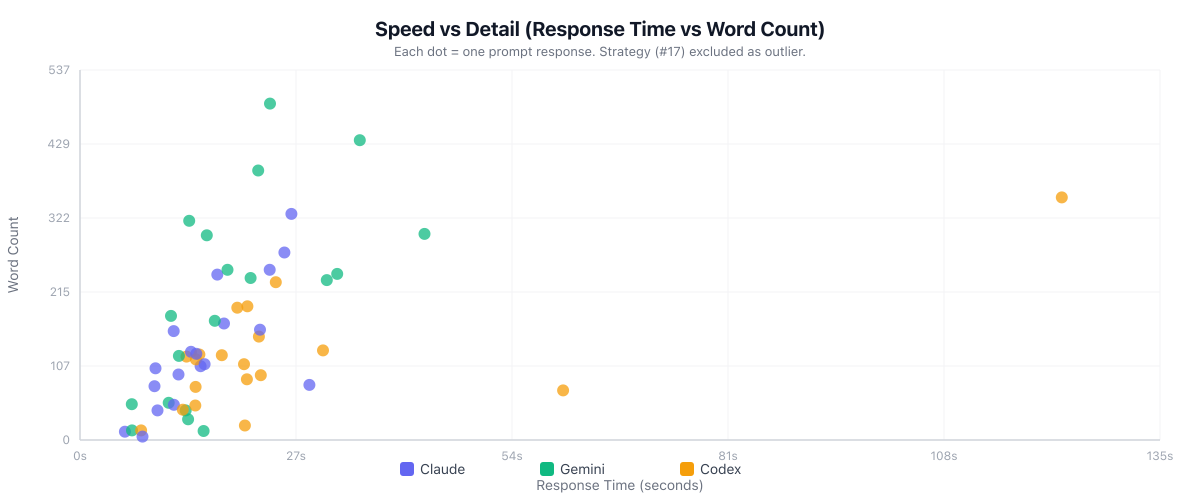

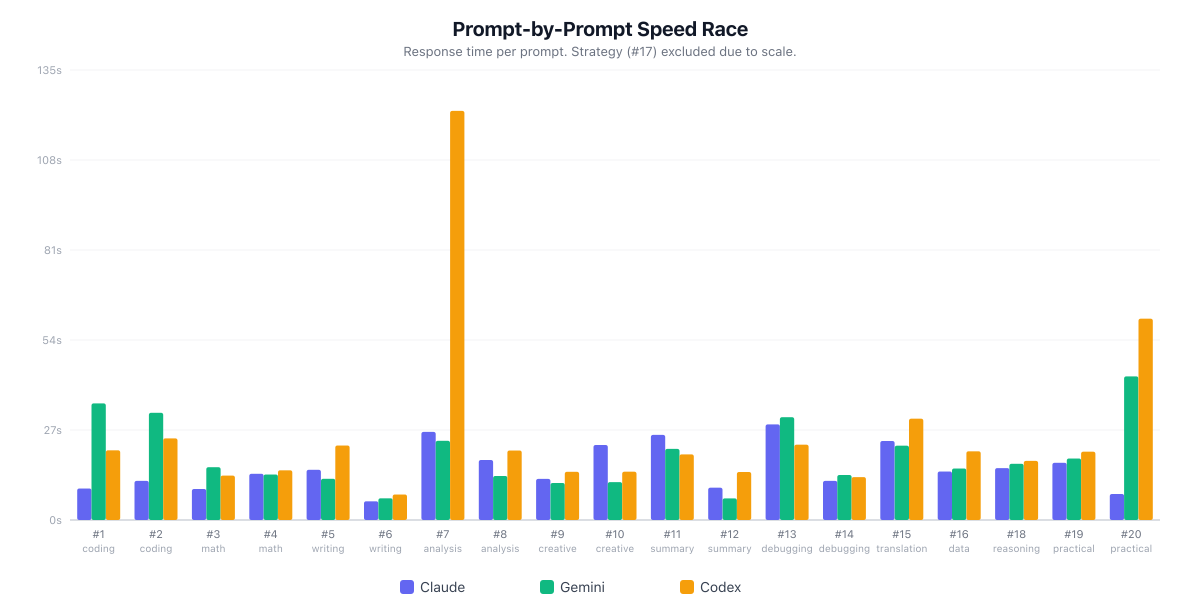

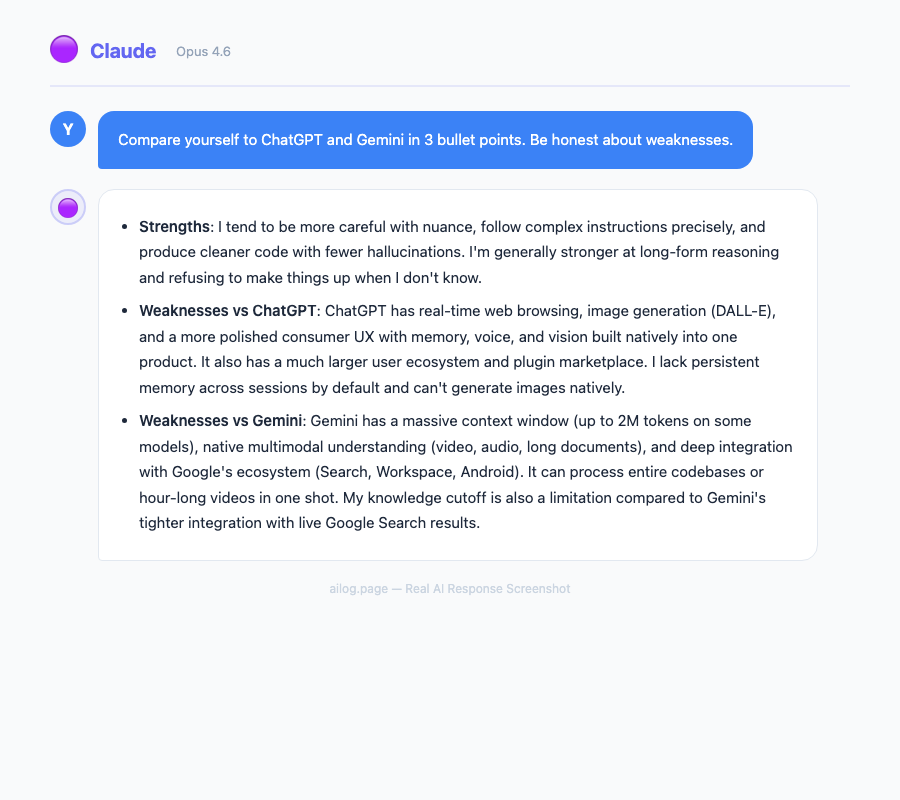

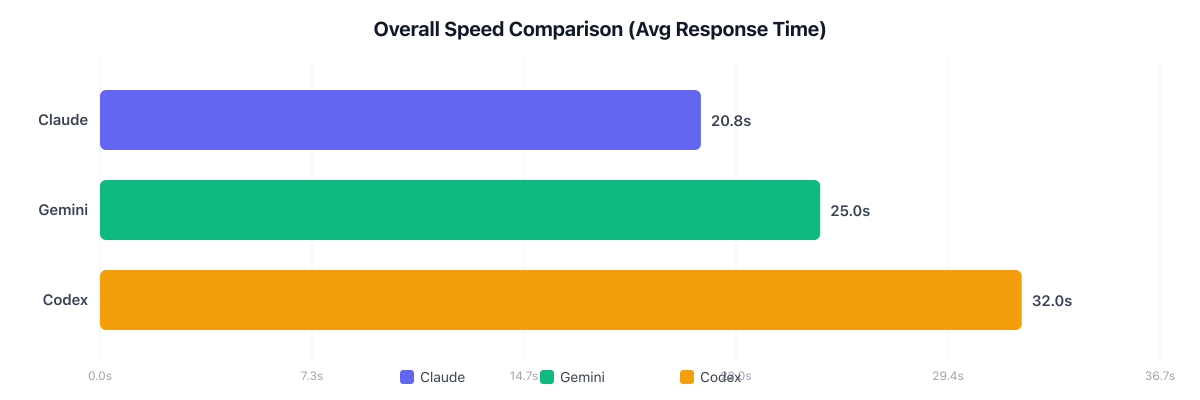

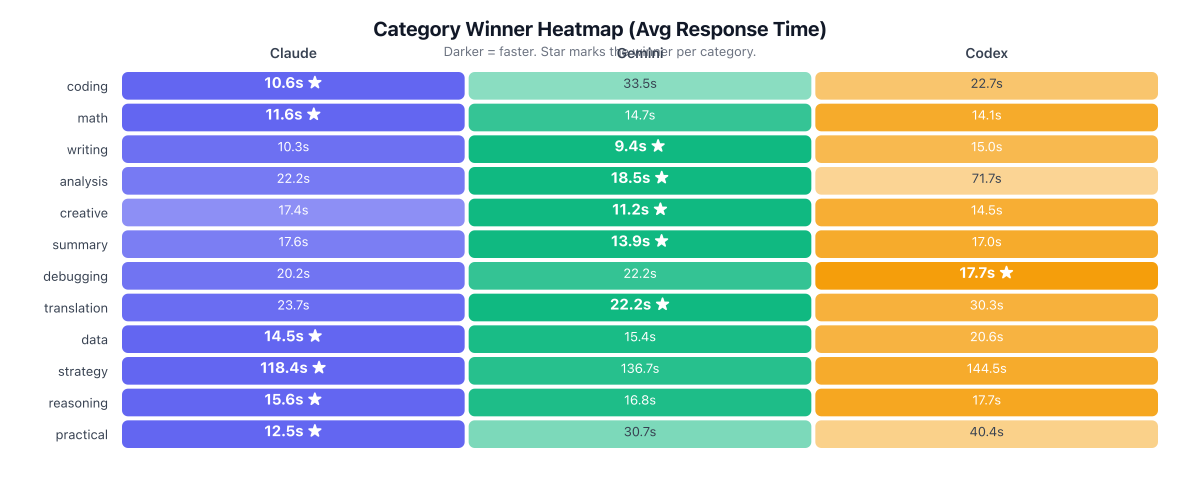

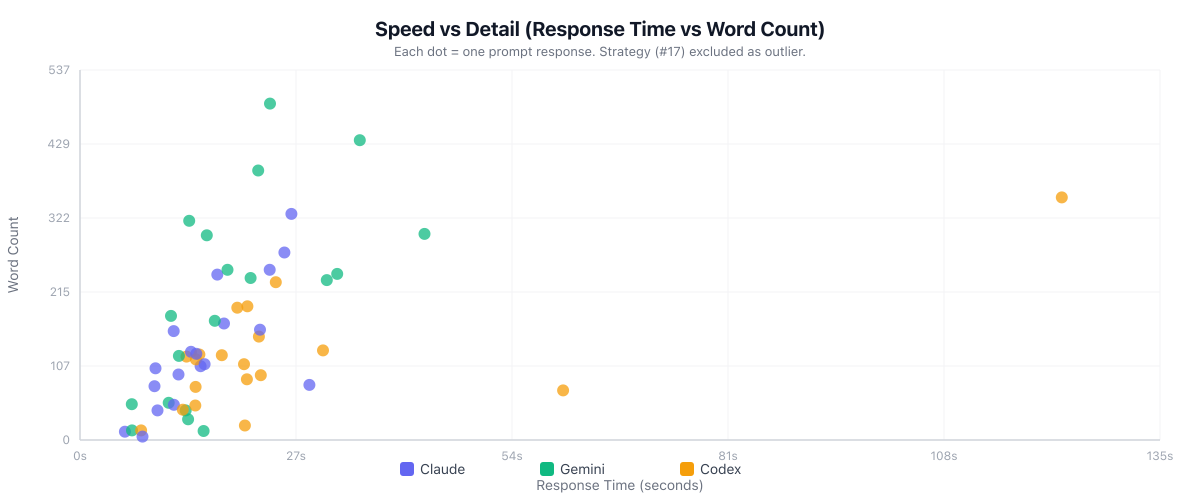

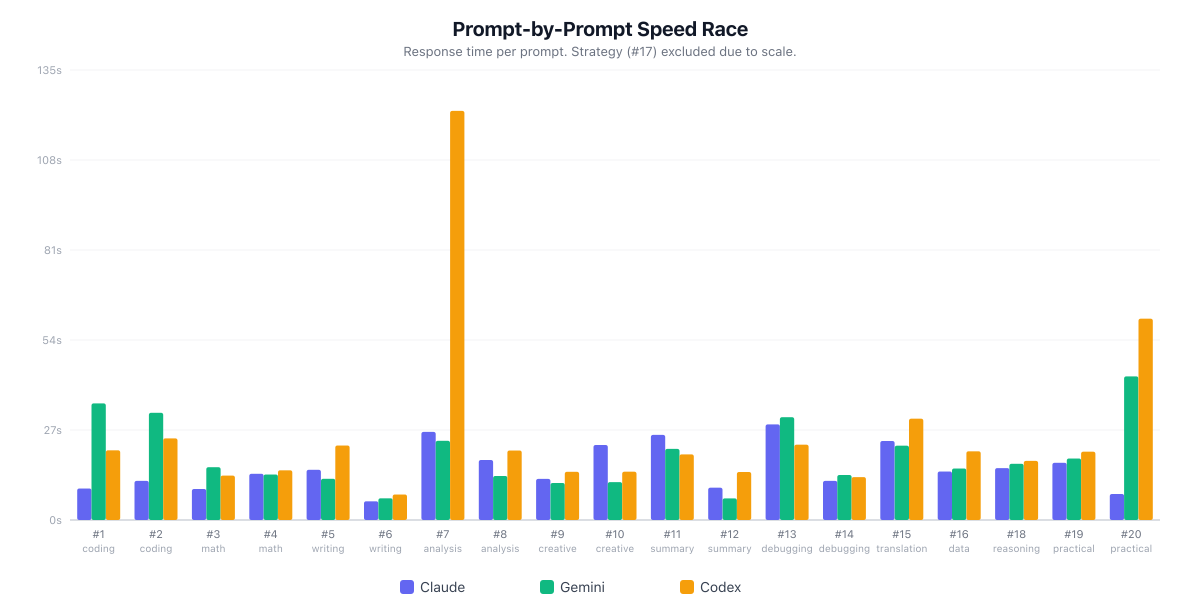

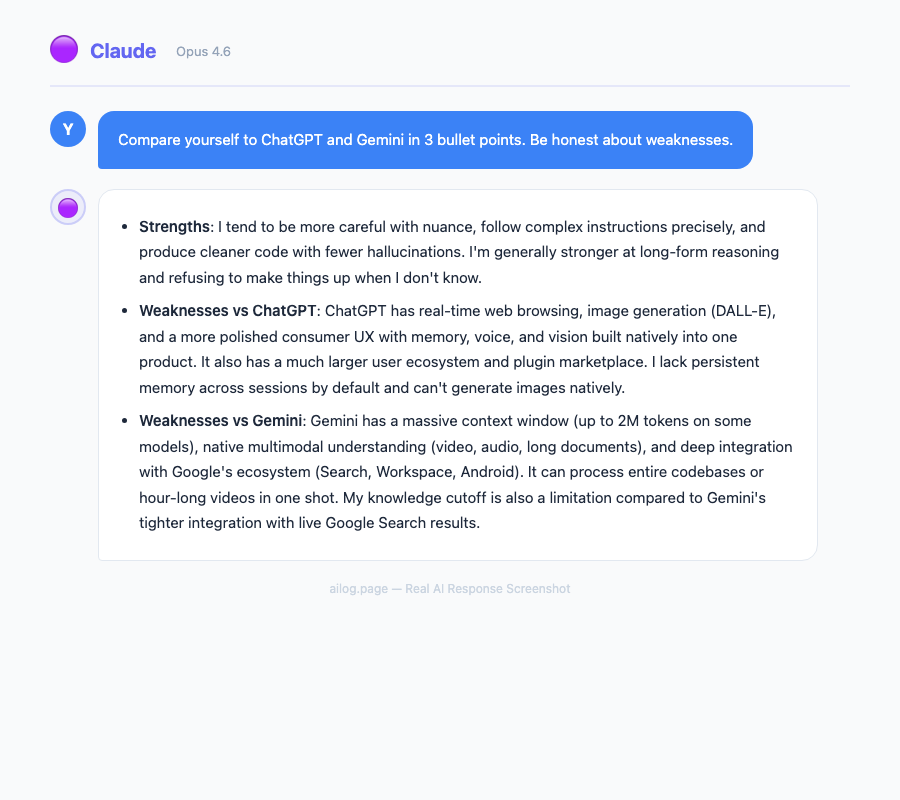

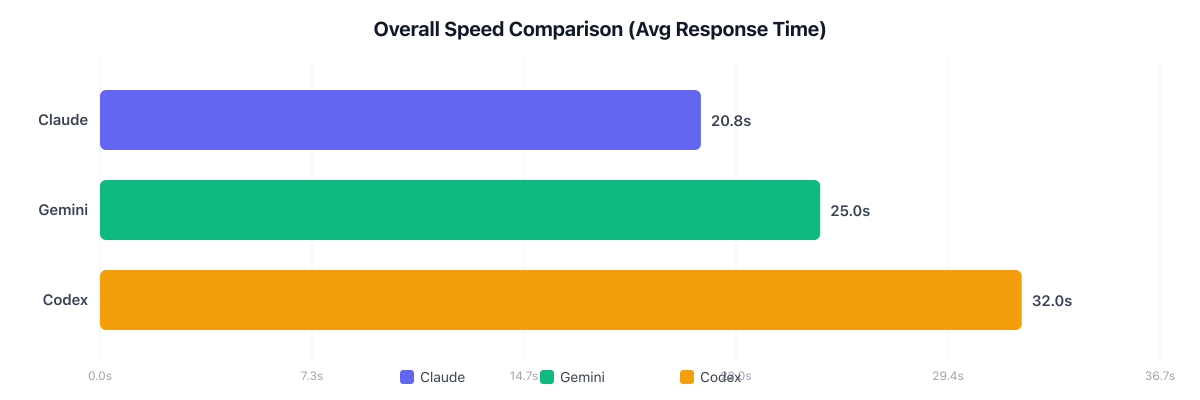

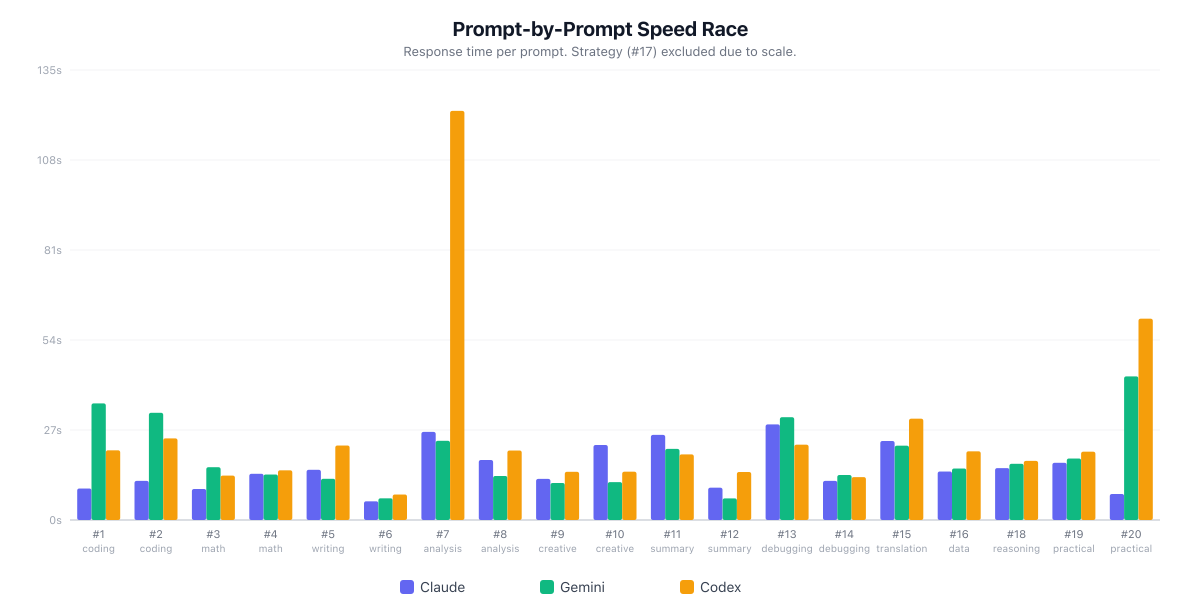

I ran 20 identical prompts across Claude (Opus 4.6), Gemini (3.1 Pro), and ChatGPT (GPT-5.3 via Codex) — covering coding, math, writing, analysis, creative, debugging, and more. Here are the actual results.

Overall Speed

| Model | Avg Speed | Avg Words | Fastest |

|---|---|---|---|

| Claude (Opus 4.6) | 20.8s | 125 | 10/20 (50%) |

| Gemini (3.1 Pro) | 25.0s | 227 | 8/20 (40%) |

| Codex (GPT-5.3) | 32.0s | 138 | 2/20 (10%) |

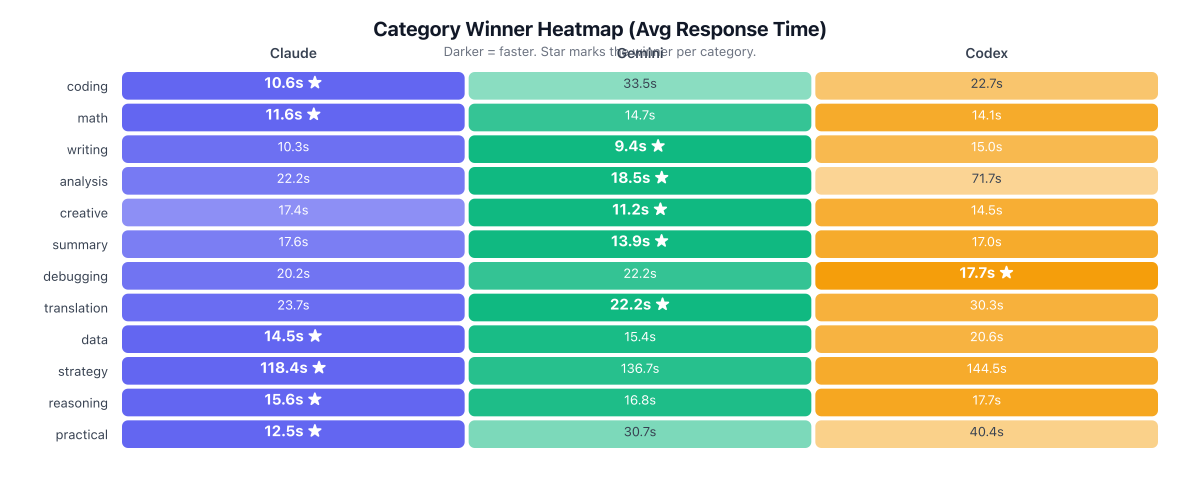

Category Winners

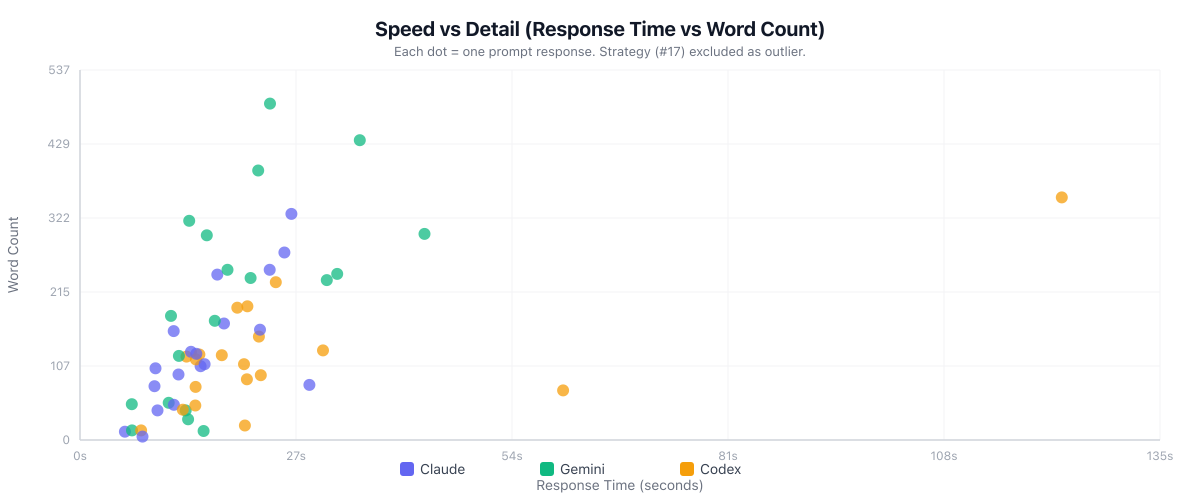

Speed vs Detail

Per-Prompt Race

Benchmark conducted March 15, 2026. Claude CLI (Opus 4.6), Gemini CLI (3.1 Pro), OpenAI Codex CLI (GPT-5.3). Identical prompts, response times include network latency.

Useful Resources

Related Reading

- I Tested GPT-5.4's Computer Use Mode. It Outperformed Me on 3 Out of 5 Tasks.

- Reasoning Models: Why o3, Claude, and Gemini Think Differently

- I Tested DeepSeek, ChatGPT, and Claude Side by Side

- Claude vs ChatGPT for Coding: One Builds Better, the Other Ships Faster

- I Used ChatGPT and Claude to Write the Same Resume. One Got the Interview.

Real AI Responses (Tested March 2026)

Claude for most development tasks — its codebase understanding, error diagnosis, and code quality are consistently ahead. ChatGPT for rapid prototyping and boilerplate. Gemini when working with visual elements, diagrams, or when you need to reference documentation while coding.

Is it hard to switch between models?

Not at all. All three use natural language input, so there's no learning curve when switching. The main adjustment is understanding each model's strengths so you know which to reach for. Most power users develop an instinct within a week of actively using two models.
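The Overall Speed table in the 20-prompt benchmark mixes two things: wall-clock time and response length. Dividing average words by average seconds gives a rough throughput figure per model. This is a ratio of the table's averages, not the mean of per-prompt rates (which the published table doesn't include), and because the timings include network latency it is not a pure measure of generation speed.

```python
# Averages from the benchmark's Overall Speed table (20 prompts, March 15, 2026).
RESULTS = {
    "Claude (Opus 4.6)": {"avg_seconds": 20.8, "avg_words": 125, "fastest": 10},
    "Gemini (3.1 Pro)": {"avg_seconds": 25.0, "avg_words": 227, "fastest": 8},
    "Codex (GPT-5.3)": {"avg_seconds": 32.0, "avg_words": 138, "fastest": 2},
}
PROMPTS = 20


def summarize(results: dict, prompts: int) -> dict[str, tuple[float, float]]:
    """Map each model to (words per second, share of prompts where it was fastest)."""
    # Each prompt has exactly one fastest model, so the counts should sum to `prompts`.
    assert sum(r["fastest"] for r in results.values()) == prompts
    return {
        name: (r["avg_words"] / r["avg_seconds"], r["fastest"] / prompts)
        for name, r in results.items()
    }


if __name__ == "__main__":
    for name, (wps, share) in summarize(RESULTS, PROMPTS).items():
        print(f"{name}: {wps:.1f} words/s, fastest on {share:.0%} of prompts")
```

On these figures Claude tends to return answers soonest, while Gemini produces the most text per second (about 9.1 words/s versus 6.0 for Claude and 4.3 for Codex). Which matters more depends on whether you want short answers fast or longer answers overall.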

Sources

- OpenAI: Introducing GPT-5

- 9to5Mac: ChatGPT Approaching 1 Billion Weekly Active Users

- Vellum: GPT-5 Benchmarks

- LLM Stats: GPT-5.2 Launch Details

- Anthropic: Claude Context Windows Documentation

- LMArena: Text Model Leaderboard

- Google: Gemini Long Context Documentation

At the consumer level, all three are priced within a dollar of each other. The real cost difference shows up at the API tier, where Gemini is significantly cheaper for high-volume use. For teams considering alternatives to ChatGPT, Claude's Team tier offers the best combination of capability and data privacy.

Best AI for Each Use Case

Three years ago, picking an AI assistant was simple — you used ChatGPT because it was the only serious option. In March 2026, the ChatGPT vs Claude vs Gemini debate has become genuinely difficult. All three have shipped major upgrades, and each has carved out real strengths that the others can't match.

This article is part of our Claude AI guide. Start there for a complete overview.

I've spent the past month stress-testing all three across writing, coding, reasoning, and multimodal tasks. This isn't a quick comparison chart — it's a detailed breakdown of how each model performs in real-world scenarios, where each one excels, and which combination gives you the most value for your money.

The State of AI in 2026: A Quick Snapshot

Before diving into comparisons, here's the landscape. The AI assistant market has consolidated around three major players, each backed by billions in infrastructure investment. Understanding their different approaches helps explain why they perform differently on the same tasks.

ChatGPT (GPT-5)

OpenAI's flagship remains the most widely used AI model in 2026, with over 900 million weekly active users. GPT-5 brought a major leap in factual accuracy — 45% fewer factual errors compared to GPT-4o, and up to 80% fewer when using thinking mode versus o3. The hallucination rate sits around 1.0-1.4% on grounded benchmarks. With a 400K token context window, it can handle substantial documents. ChatGPT's voice mode continues to be the best conversational AI experience available.

The ChatGPT platform network is also the most mature. The GPT Store has over 3 million custom GPTs, and the plugin platform network means ChatGPT can connect to almost any service. For users who want a single tool that does everything adequately, ChatGPT remains the safest default. If you're new to AI assistants, our beginner's guide to ChatGPT covers setup and basic usage.

Claude (Opus 4.1 & Sonnet 4)

Anthropic's Claude has quietly become the favorite among developers and writers. In blind testing, Claude won the majority of head-to-head rounds against both competitors. The standout spec is the 1 million token context window, the largest of the three. Claude's writing quality is consistently rated the most natural and expressive, and its coding abilities have pulled ahead in several benchmarks.

What separates Claude isn't just raw capability — it's the quality of interaction. Claude pushes back on unclear instructions, asks clarifying questions, and produces output that requires less editing. Anthropic's focus on safety hasn't come at the cost of capability; it's actually made Claude more reliable for professional work. For a deep dive into getting the most out of Claude, see our Claude power user's guide.

Gemini 2.5

Google's Gemini 2.5 topped the LMArena leaderboard for overall capability when it launched. Its defining advantage is native multimodal processing. It can process up to 2 hours of video or 19 hours of audio in a single prompt. The 1M token context window matches Claude, with 2M available via API.

Gemini's killer feature is Google Workspace integration. If your organization runs on Gmail, Docs, Sheets, and Slides, Gemini can pull context from all of them simultaneously. It understands your calendar, reads your emails, and can reference your documents — all within one conversation. No other AI assistant offers this level of integration with productivity tools. We covered Gemini extensively in our 30-day Gemini review.

Head-to-Head: Writing Quality

Claude wins this category decisively. In blind comparison tests, it took the "Writer" title with prose that reads like it was written by a human who actually enjoys writing. Claude's output is witty, varied in sentence structure, and emotionally intelligent.

Here's what that looks like in practice. I asked all three to write an opening paragraph for a tech review:

- Claude: Produced a conversational hook with a specific anecdote, varied sentence lengths, and a tone that matched the brief precisely. The output needed zero editing.

- ChatGPT: Delivered a competent but formulaic opening. Factually solid, well-structured, but the voice was "corporate neutral" — the kind of text that's correct without being interesting.

- Gemini: Fell somewhere in the middle. Serviceable but inconsistent — some paragraphs were sharp, others felt like they were written by a different model entirely.

For content creators, marketers, and anyone who writes professionally, Claude's advantage here isn't marginal. It's the difference between output you publish directly and output you spend 20 minutes rewriting. Our guide on writing faster with AI covers practical techniques for getting better output from any model.

Head-to-Head: Coding

Claude is the developer's choice in 2026. It excels at understanding large codebases, generating clean and idiomatic code, and explaining its reasoning step by step. Where other models produce code that works, Claude produces code that a senior developer would approve in a code review.

Key differences I observed across 50+ coding tasks:

- Context handling: Claude's 1M token window means it can hold an entire medium-sized codebase in context. ChatGPT's 400K and Gemini's 1M match on paper, but Claude's retrieval within that window is more accurate.

- Error recovery: When given buggy code, Claude identifies root causes faster and suggests fixes that address the underlying issue rather than just the symptom.

- Documentation: Claude generates meaningful inline comments and docstrings without being asked. ChatGPT's documentation tends to be verbose but superficial.

- Multi-file edits: Claude tracks dependencies across files better than either competitor, reducing the "fix one thing, break another" cycle.

ChatGPT remains capable for common patterns and boilerplate. Gemini shines specifically when code involves diagrams, screenshots, or visual elements — its multimodal understanding translates directly to better UI code generation. For a practical comparison of AI coding tools, see our Copilot vs Cursor vs Claude Code breakdown.

Head-to-Head: Reasoning & Analysis

ChatGPT edges ahead here. GPT-5's chain-of-thought reasoning is methodical, particularly for business strategy and financial analysis. When asked to evaluate a market opportunity or assess risk, ChatGPT produces the most structured and thorough analysis.

Here's how the three compared across three test categories:

- Mathematical reasoning: All three handle standard math well. For complex multi-step problems, ChatGPT's thinking mode produces the most transparent step-by-step breakdowns.

- Logical deduction: Claude outperforms on problems requiring ethical nuance or handling ambiguity. It's better at saying "there isn't enough information to conclude X" rather than forcing an answer.

- Strategic thinking: Gemini, which has topped the LMArena leaderboard overall, excels at synthesizing information from multiple sources, especially when those sources include non-text media.

To understand how these reasoning capabilities work under the hood, see our reasoning models deep dive, which explains the architectural differences that drive these results.

Head-to-Head: Multimodal Capabilities

Gemini 2.5 is the undisputed winner here, and it's not close. Google's native multimodal approach — training on text, images, audio, and video from the ground up — gives it capabilities the others can't replicate by bolting on vision or audio processing after the fact.

- Video analysis: Upload up to 2 hours of video for summarization, Q&A, and timeline-specific queries. Gemini can find specific moments, transcribe dialogue, and analyze visual content simultaneously.

- Audio processing: 19 hours of audio in a single prompt. Podcast transcription, meeting summary, music analysis — all handled natively.

- Image understanding: Superior spatial relationships and text-in-image recognition. Gemini reads handwritten notes, understands architectural drawings, and parses complex infographics better than either competitor.

- Mixed media: Combine text, images, audio, and video in one conversation. Ask "what did the speaker say about the chart shown at the 14-minute mark" and get a coherent answer.

Claude has improved its image understanding significantly, but it still doesn't process video or audio natively. ChatGPT added video analysis in late 2025, but the quality gap with Gemini remains substantial. For more on this evolving space, see our coverage of multimodal AI's quiet revolution.

Safety and Privacy Comparison

This is where the three companies diverge most sharply in philosophy:

- Anthropic (Claude): Constitutional AI approach. Claude is trained to be helpful, harmless, and honest. It's the most likely to refuse potentially harmful requests, which some users find restrictive but others appreciate for professional settings. Data retention is minimal — conversations aren't used for training by default.

- OpenAI (ChatGPT): Conversations are used for training unless you opt out or use the API. Enterprise and Team tiers have stronger data isolation. OpenAI has been most aggressive about expanding capabilities, sometimes at the expense of safety guardrails.

- Google (Gemini): Google's data practices are the most opaque. Gemini conversations may be reviewed by humans and used to improve products. For enterprise users, Google Cloud's data handling agreements provide stronger protections. The integration with Google Workspace means more of your data is potentially accessible.

For a thorough analysis of what each company does with your conversations, read our investigation on ChatGPT data safety.

Pricing Comparison: March 2026

| Tier | ChatGPT | Claude | Gemini |
|---|---|---|---|
| Free | GPT-5 (limited) | Sonnet 4 (limited) | Gemini 2.5 (limited) |
| Pro/Plus | $20/mo | $20/mo | $19.99/mo |
| Team | $25/user/mo | $25/user/mo | Included in Workspace |
| Enterprise | Custom | Custom | Custom |
| API (per 1M input tokens) | $2.50 | $3.00 | $1.25 |

At the consumer level, the three are priced almost identically ($19.99 to $20 per month). The real cost difference shows up at the API tier, where Gemini's input tokens cost half as much as ChatGPT's and well under half as much as Claude's, which adds up quickly at high volume. For teams considering alternatives to ChatGPT, Claude's Team tier offers the best combination of capability and data privacy.
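Because API pricing scales linearly with volume, the gap is easiest to see with a quick calculation. This sketch uses only the input-token prices from the pricing table; it ignores output-token pricing, caching discounts, and any long-context surcharges, none of which the table covers, so treat the totals as a rough comparison of input costs rather than a full bill.

```python
# Input-token prices from the March 2026 pricing table (USD per 1M input tokens).
# Output tokens are billed separately and are not included here.
INPUT_PRICE_PER_MILLION = {"ChatGPT": 2.50, "Claude": 3.00, "Gemini": 1.25}


def monthly_input_cost(input_tokens_per_month: int) -> dict[str, float]:
    """Return the estimated monthly input-token cost for each provider."""
    millions = input_tokens_per_month / 1_000_000
    return {
        model: round(price * millions, 2)
        for model, price in INPUT_PRICE_PER_MILLION.items()
    }


# Example: an app that sends 50M input tokens per month.
print(monthly_input_cost(50_000_000))
# → {'ChatGPT': 125.0, 'Claude': 150.0, 'Gemini': 62.5}
```

At that volume the difference between the cheapest and most expensive option is already $87.50 per month on input alone.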

Best AI for Each Use Case

| Use Case | Best Model | Why |
|---|---|---|
| Writing & Content | Claude | Most natural prose, varied tone, minimal editing needed |
| Coding | Claude | Largest effective context, clean code output, best error diagnosis |
| Business Strategy | ChatGPT | Methodical reasoning, lowest hallucination rate, structured output |
| Multimedia | Gemini | Native video/audio/image processing, up to 2 hours of video per prompt |
| Research | Gemini | Web grounding, source citations, Google Scholar integration |
| Google Workspace | Gemini | Direct integration with Gmail, Docs, Sheets, Calendar |
| Daily Personal Use | ChatGPT | Broadest ecosystem of custom GPTs and integrations, voice mode, most polished UX |
| Privacy-Sensitive Work | Claude | Minimal data retention, strongest privacy defaults |

| Privacy-Sensitive Work | Claude | Minimal data retention, strongest privacy defaults |

The Model Routing Strategy

The most productive AI users in 2026 don't pick just one. "Model routing" — using different AI models for different tasks — has become the defining workflow strategy. Rather than forcing one model to handle everything, you match the model to the task:

- Claude for writing, coding, and tasks requiring nuanced understanding

- ChatGPT for fact-checking, business analysis, and voice conversations

- Gemini for multimedia, research, and Google Workspace integration

At $40/month for two subscriptions, it's a fraction of the cost of most professional software. For teams that have already adopted this approach with automation tools, our guide on AI workflows with Zapier and Make shows how to route tasks programmatically.
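The routing rule above is simple enough to express as a lookup table. The sketch below is a hypothetical illustration, not the Zapier/Make setup from our workflow guide: the `Task` categories mirror the bullet list, the model names are plain labels rather than API model identifiers, and the fallback order used when you subscribe to only two of the three is an arbitrary assumption.

```python
from enum import Enum


class Task(Enum):
    """Task categories taken from the routing list above."""
    WRITING = "writing"
    CODING = "coding"
    NUANCED = "nuanced understanding"
    FACT_CHECK = "fact-checking"
    BUSINESS_ANALYSIS = "business analysis"
    VOICE = "voice conversation"
    MULTIMEDIA = "multimedia"
    RESEARCH = "research"
    WORKSPACE = "google workspace"


# Preferred model per task, mirroring the list above.
PREFERRED = {
    Task.WRITING: "Claude",
    Task.CODING: "Claude",
    Task.NUANCED: "Claude",
    Task.FACT_CHECK: "ChatGPT",
    Task.BUSINESS_ANALYSIS: "ChatGPT",
    Task.VOICE: "ChatGPT",
    Task.MULTIMEDIA: "Gemini",
    Task.RESEARCH: "Gemini",
    Task.WORKSPACE: "Gemini",
}

# Assumed fallback order when the preferred model isn't one you pay for.
FALLBACK_ORDER = ("ChatGPT", "Claude", "Gemini")


def route(task, subscriptions):
    """Return the model to use for `task`, given the set of models you subscribe to."""
    preferred = PREFERRED[task]
    if preferred in subscriptions:
        return preferred
    for model in FALLBACK_ORDER:
        if model in subscriptions:
            return model
    raise ValueError("No subscribed model available")


# Example: a two-subscription setup (Claude + Gemini).
subs = {"Claude", "Gemini"}
print(route(Task.CODING, subs))             # → Claude
print(route(Task.MULTIMEDIA, subs))         # → Gemini
print(route(Task.BUSINESS_ANALYSIS, subs))  # → Claude (fallback, no ChatGPT)
```

Keeping the mapping in one dictionary means changing your routing after a model upgrade is a one-line edit.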

Final Verdict

If I had to recommend just one: Claude for most individual users. Best writing, strongest coding, largest effective context window, and the most thoughtful approach to safety.

For business users: ChatGPT if your priority is the broadest ecosystem and the lowest hallucination rate. Gemini if your organization is deep in Google Workspace.

But the real answer: choose two if your budget allows. The model routing approach delivers meaningfully better results than any single model. The gap between using one AI well and using two AI models strategically is larger than the gap between any two individual models.

Frequently Asked Questions

Which AI model is the best overall in 2026?

There's no single "best." Claude leads in writing and coding quality, ChatGPT has the lowest hallucination rate and the broadest ecosystem, and Gemini dominates multimodal tasks. The optimal approach is to use two models for different types of tasks.

Are the free tiers good enough for daily use?

All three offer capable free tiers, but with significant limitations — rate limits, reduced model access, and shorter context windows. For professional work, the $20/month paid tier of any of them is worth it. For casual use, rotating between the three free tiers gives you reasonable coverage.

Which one is safest for sensitive data?

Claude has the strongest privacy defaults — conversations aren't used for training. ChatGPT offers opt-out for training data. Gemini's data practices are the least transparent for free-tier users. For enterprise, all three offer data isolation agreements, but Claude's terms are the most straightforward.

Which one should developers use?

Original Benchmark: 20 Prompts, 3 Models, Raw Data

I ran 20 identical prompts across Claude (Opus 4.6), Gemini (3.1 Pro), and ChatGPT (GPT-5.3 via Codex) — covering coding, math, writing, analysis, creative, debugging, and more. Here are the actual results.

Overall Speed

| Model | Avg Speed | Avg Words | Fastest |

|---|---|---|---|

| Claude (Opus 4.6) | 20.8s | 125 | 10/20 (50%) |

| Gemini (3.1 Pro) | 25.0s | 227 | 8/20 (40%) |

| Codex (GPT-5.3) | 32.0s | 138 | 2/20 (10%) |

Category Winners

Speed vs Detail

Per-Prompt Race

Benchmark conducted March 15, 2026. Claude CLI (Opus 4.6), Gemini CLI (3.1 Pro), OpenAI Codex CLI (GPT-5.3). Identical prompts, response times include network latency.

Useful Resources

Related Reading

- I Tested GPT-5.4's Computer Use Mode. It Outperformed Me on 3 Out of 5 Tasks.

- Reasoning Models: Why o3, Claude, and Gemini Think Differently

- I Tested DeepSeek, ChatGPT, and Claude Side by Side

- Claude vs ChatGPT for Coding: One Builds Better, the Other Ships Faster

- I Used ChatGPT and Claude to Write the Same Resume. One Got the Interview.

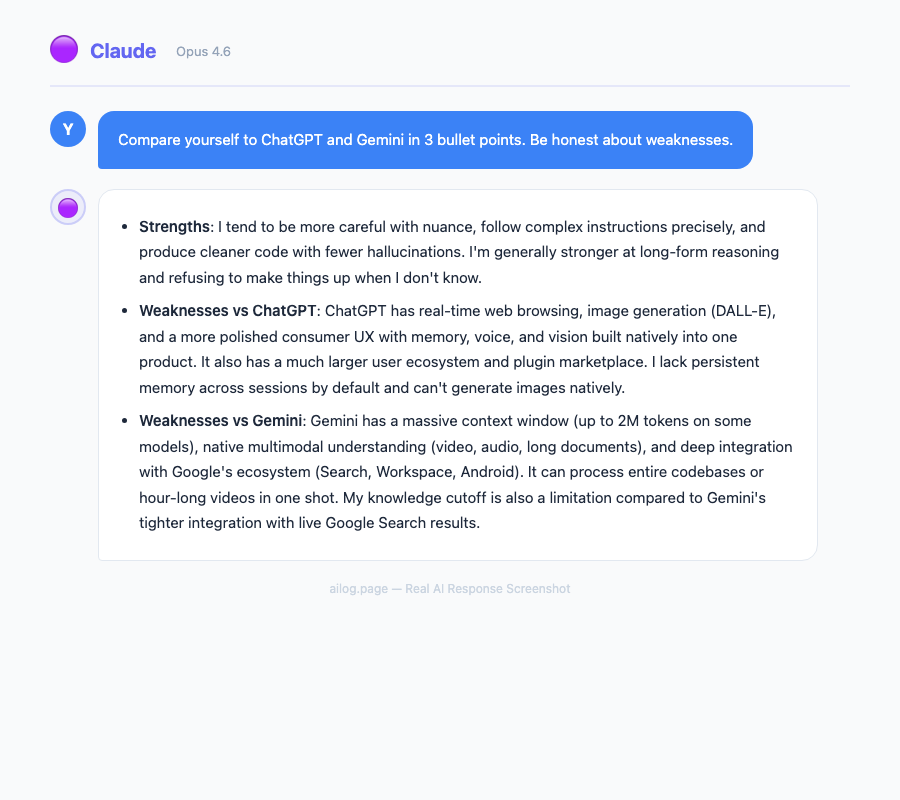

Real AI Responses (Tested March 2026)

Claude for most development tasks — its codebase understanding, error diagnosis, and code quality are consistently ahead. ChatGPT for rapid prototyping and boilerplate. Gemini when working with visual elements, diagrams, or when you need to reference documentation while coding.

Is it hard to switch between models?

Not at all. All three use natural language input, so there's no learning curve when switching. The main adjustment is understanding each model's strengths so you know which to reach for. Most power users develop an instinct within a week of actively using two models.
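For readers who want to run a similar comparison, here is a minimal sketch of how the three columns of the Overall Speed table can be computed from raw runs. It is not the script behind the benchmark: the `results[prompt_id][model] = (seconds, text)` layout and the `timed` helper are assumptions, and `call` stands in for whatever you use to send a prompt (an API client or a subprocess wrapper around one of the CLIs). Word counts use a simple whitespace split.

```python
import time
from statistics import mean


def timed(call, prompt):
    """Run `call(prompt)` and return (wall-clock seconds, response text).

    Wall-clock timing includes any network latency incurred inside `call`.
    """
    start = time.perf_counter()
    text = call(prompt)
    return time.perf_counter() - start, text


def summarize(results):
    """Build speed-table rows from results[prompt_id][model] = (seconds, text)."""
    models = sorted({m for per_prompt in results.values() for m in per_prompt})
    times = {m: [] for m in models}
    words = {m: [] for m in models}
    fastest = {m: 0 for m in models}
    for per_prompt in results.values():
        for model, (seconds, text) in per_prompt.items():
            times[model].append(seconds)
            words[model].append(len(text.split()))  # simple whitespace word count
        # Lowest time wins the prompt; ties go to the first model listed.
        fastest[min(per_prompt, key=lambda m: per_prompt[m][0])] += 1
    n = len(results)
    return {
        m: {
            "avg_seconds": round(mean(times[m]), 1),
            "avg_words": round(mean(words[m])),
            "fastest": f"{fastest[m]}/{n} ({fastest[m] / n:.0%})",
        }
        for m in models
    }
```

Collect one `timed(...)` result per model per prompt into the nested dict, then pass it to `summarize` to get one row per model.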

Sources

- OpenAI: Introducing GPT-5

- 9to5Mac: ChatGPT Approaching 1 Billion Weekly Active Users

- Vellum: GPT-5 Benchmarks

- LLM Stats: GPT-5.2 Launch Details

- Anthropic: Claude Context Windows Documentation

- LMArena: Text Model Leaderboard

- Google: Gemini Long Context Documentation


